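How to Implement VGG16 in PyTorch: A VGG16 Code Example

Date: 2020-06-24 Editor: 袖梨 Source: 一聚教程网

How do you implement VGG16 in PyTorch? This article shares a PyTorch implementation of VGG16 with a commented code listing, for readers who need a reference.

The code is as follows: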

    import torch
    import torch.nn as nn
    import torch.nn.functional as F


    class VGG16(nn.Module):
        def __init__(self):
            super(VGG16, self).__init__()

            # Input: 3 * 224 * 224
            self.conv1_1 = nn.Conv2d(3, 64, 3)  # 64 * 222 * 222
            self.conv1_2 = nn.Conv2d(64, 64, 3, padding=(1, 1))  # 64 * 222 * 222
            self.maxpool1 = nn.MaxPool2d((2, 2), padding=(1, 1))  # pooling 64 * 112 * 112

            self.conv2_1 = nn.Conv2d(64, 128, 3)  # 128 * 110 * 110
            self.conv2_2 = nn.Conv2d(128, 128, 3, padding=(1, 1))  # 128 * 110 * 110
            self.maxpool2 = nn.MaxPool2d((2, 2), padding=(1, 1))  # pooling 128 * 56 * 56

            self.conv3_1 = nn.Conv2d(128, 256, 3)  # 256 * 54 * 54
            self.conv3_2 = nn.Conv2d(256, 256, 3, padding=(1, 1))  # 256 * 54 * 54
            self.conv3_3 = nn.Conv2d(256, 256, 3, padding=(1, 1))  # 256 * 54 * 54
            self.maxpool3 = nn.MaxPool2d((2, 2), padding=(1, 1))  # pooling 256 * 28 * 28

            self.conv4_1 = nn.Conv2d(256, 512, 3)  # 512 * 26 * 26
            self.conv4_2 = nn.Conv2d(512, 512, 3, padding=(1, 1))  # 512 * 26 * 26
            self.conv4_3 = nn.Conv2d(512, 512, 3, padding=(1, 1))  # 512 * 26 * 26
            self.maxpool4 = nn.MaxPool2d((2, 2), padding=(1, 1))  # pooling 512 * 14 * 14

            self.conv5_1 = nn.Conv2d(512, 512, 3)  # 512 * 12 * 12
            self.conv5_2 = nn.Conv2d(512, 512, 3, padding=(1, 1))  # 512 * 12 * 12
            self.conv5_3 = nn.Conv2d(512, 512, 3, padding=(1, 1))  # 512 * 12 * 12
            self.maxpool5 = nn.MaxPool2d((2, 2), padding=(1, 1))  # pooling 512 * 7 * 7

            # Fully connected layers, applied after flattening in forward()
            self.fc1 = nn.Linear(512 * 7 * 7, 4096)
            self.fc2 = nn.Linear(4096, 4096)
            self.fc3 = nn.Linear(4096, 1000)
            # Output: 1000 class scores (log-softmax is applied in forward())

        def forward(self, x):
            # x.size(0) is the batch size
            in_size = x.size(0)

            out = self.conv1_1(x)  # 222
            out = F.relu(out)
            out = self.conv1_2(out)  # 222
            out = F.relu(out)
            out = self.maxpool1(out)  # 112

            out = self.conv2_1(out)  # 110
            out = F.relu(out)
            out = self.conv2_2(out)  # 110
            out = F.relu(out)
            out = self.maxpool2(out)  # 56

            out = self.conv3_1(out)  # 54
            out = F.relu(out)
            out = self.conv3_2(out)  # 54
            out = F.relu(out)
            out = self.conv3_3(out)  # 54
            out = F.relu(out)
            out = self.maxpool3(out)  # 28

            out = self.conv4_1(out)  # 26
            out = F.relu(out)
            out = self.conv4_2(out)  # 26
            out = F.relu(out)
            out = self.conv4_3(out)  # 26
            out = F.relu(out)
            out = self.maxpool4(out)  # 14

            out = self.conv5_1(out)  # 12
            out = F.relu(out)
            out = self.conv5_2(out)  # 12
            out = F.relu(out)
            out = self.conv5_3(out)  # 12
            out = F.relu(out)
            out = self.maxpool5(out)  # 7

            # Flatten to (batch_size, 512 * 7 * 7)
            out = out.view(in_size, -1)

            out = self.fc1(out)
            out = F.relu(out)
            out = self.fc2(out)
            out = F.relu(out)
            out = self.fc3(out)
            out = F.log_softmax(out, dim=1)

            return out
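
The sizes in the comments can be checked with the usual output-size formula for convolution and pooling layers, out = floor((n + 2p - k) / s) + 1. The sketch below is a plain-Python trace and is not part of the original article; the helper name `out_size` and the `BLOCKS` table are made up for illustration. It also shows that this listing differs from the canonical VGG16 layout, in which every 3 × 3 convolution is padded by 1 and pooling is unpadded: here the first convolution of each block is unpadded and each pooling layer is padded by 1, which still ends at 7 × 7.

```python
def out_size(n, kernel, stride, padding):
    """Spatial output size of a conv or pooling layer (floor mode, dilation 1)."""
    return (n + 2 * padding - kernel) // stride + 1


# Number of convolutions per block in the listing above.
BLOCKS = [2, 2, 3, 3, 3]


def trace(n=224):
    """Return (size after convs, size after pooling) for each block."""
    sizes = []
    for num_convs in BLOCKS:
        # First conv in the block: 3x3, stride 1, no padding.
        n = out_size(n, kernel=3, stride=1, padding=0)
        # Remaining convs: 3x3, stride 1, padding 1 (size unchanged).
        for _ in range(num_convs - 1):
            n = out_size(n, kernel=3, stride=1, padding=1)
        conv_size = n
        # MaxPool2d((2, 2), padding=(1, 1)): stride defaults to the kernel size.
        n = out_size(n, kernel=2, stride=2, padding=1)
        sizes.append((conv_size, n))
    return sizes


for block, (conv_size, pooled) in enumerate(trace(), start=1):
    print(f"block {block}: conv {conv_size} -> pool {pooled}")
```

The size after the last pooling step is what `fc1` relies on when it takes `512 * 7 * 7` input features.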

Supplementary note: implementing VGG in PyTorch (GPU version)

The code is as follows:

    import torch
    from torch import nn
    from torch import optim
    from PIL import Image
    import numpy as np

    print(torch.cuda.is_available())
    # Use the first GPU when available, otherwise fall back to the CPU
    device = torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')

    path = "/content/drive/My Drive/Colab Notebooks/data/dog_vs_cat/"

    train_X = np.empty((2000, 224, 224, 3), dtype="float32")
    train_Y = np.empty((2000,), dtype="int")
    train_XX = np.empty((2000, 3, 224, 224), dtype="float32")

    # Load 1000 cat images (label 0)
    for i in range(1000):
        file_path = path + "cat." + str(i) + ".jpg"
        image = Image.open(file_path).convert("RGB")  # force 3 channels
        # Image.ANTIALIAS was removed in Pillow 10; Image.LANCZOS is the same filter
        resized_image = image.resize((224, 224), Image.LANCZOS)
        img = np.array(resized_image)
        train_X[i, :, :, :] = img
        train_Y[i] = 0

    # Load 1000 dog images (label 1)
    for i in range(1000):
        file_path = path + "dog." + str(i) + ".jpg"
        image = Image.open(file_path).convert("RGB")
        resized_image = image.resize((224, 224), Image.LANCZOS)
        img = np.array(resized_image)
        train_X[i + 1000, :, :, :] = img
        train_Y[i + 1000] = 1

    # Scale pixel values to [0, 1]
    train_X /= 255

    # Shuffle images and labels with the same permutation
    index = np.arange(2000)
    np.random.shuffle(index)
    train_X = train_X[index, :, :, :]
    train_Y = train_Y[index]

    # Convert (N, H, W, C) to the (N, C, H, W) layout expected by nn.Conv2d
    for i in range(3):
        train_XX[:, i, :, :] = train_X[:, :, :, i]


    # Build the network
    class Net(nn.Module):
        def __init__(self):
            super(Net, self).__init__()
            self.conv1 = nn.Sequential(
                nn.Conv2d(in_channels=3, out_channels=64, kernel_size=3, stride=1, padding=1),
                nn.ReLU(),
                nn.Conv2d(in_channels=64, out_channels=64, kernel_size=3, stride=1, padding=1),
                nn.ReLU(),
                nn.BatchNorm2d(num_features=64, eps=1e-05, momentum=0.1, affine=True),
                nn.MaxPool2d(kernel_size=2, stride=2)
            )
            self.conv2 = nn.Sequential(
                nn.Conv2d(in_channels=64, out_channels=128, kernel_size=3, stride=1, padding=1),
                nn.ReLU(),
                nn.Conv2d(in_channels=128, out_channels=128, kernel_size=3, stride=1, padding=1),
                nn.ReLU(),
                nn.BatchNorm2d(128, eps=1e-5, momentum=0.1, affine=True),
                nn.MaxPool2d(kernel_size=2, stride=2)
            )
            self.conv3 = nn.Sequential(
                nn.Conv2d(in_channels=128, out_channels=256, kernel_size=3, stride=1, padding=1),
                nn.ReLU(),
                nn.Conv2d(in_channels=256, out_channels=256, kernel_size=3, stride=1, padding=1),
                nn.ReLU(),
                nn.Conv2d(in_channels=256, out_channels=256, kernel_size=3, stride=1, padding=1),
                nn.ReLU(),
                nn.BatchNorm2d(256, eps=1e-5, momentum=0.1, affine=True),
                nn.MaxPool2d(kernel_size=2, stride=2)
            )
            self.conv4 = nn.Sequential(
                nn.Conv2d(in_channels=256, out_channels=512, kernel_size=3, stride=1, padding=1),
                nn.ReLU(),
                nn.Conv2d(in_channels=512, out_channels=512, kernel_size=3, stride=1, padding=1),
                nn.ReLU(),
                nn.Conv2d(in_channels=512, out_channels=512, kernel_size=3, stride=1, padding=1),
                nn.ReLU(),
                nn.BatchNorm2d(512, eps=1e-5, momentum=0.1, affine=True),
                nn.MaxPool2d(kernel_size=2, stride=2)
            )
            self.conv5 = nn.Sequential(
                nn.Conv2d(in_channels=512, out_channels=512, kernel_size=3, stride=1, padding=1),
                nn.ReLU(),
                nn.Conv2d(in_channels=512, out_channels=512, kernel_size=3, stride=1, padding=1),
                nn.ReLU(),
                nn.Conv2d(in_channels=512, out_channels=512, kernel_size=3, stride=1, padding=1),
                nn.ReLU(),
                nn.BatchNorm2d(512, eps=1e-5, momentum=0.1, affine=True),
                nn.MaxPool2d(kernel_size=2, stride=2)
            )
            # Classifier head with 2 outputs (cat, dog)
            self.dense1 = nn.Sequential(
                nn.Linear(7 * 7 * 512, 4096),
                nn.ReLU(),
                nn.Linear(4096, 4096),
                nn.ReLU(),
                nn.Linear(4096, 2)
            )

        def forward(self, x):
            x = self.conv1(x)
            x = self.conv2(x)
            x = self.conv3(x)
            x = self.conv4(x)
            x = self.conv5(x)
            x = x.view(-1, 7 * 7 * 512)
            x = self.dense1(x)
            return x


    batch_size = 16
    net = Net().to(device)
    criterion = nn.CrossEntropyLoss()
    optimizer = optim.Adam(net.parameters(), lr=0.0005)

    train_loss = []
    for epoch in range(10):
        for i in range(2000 // batch_size):
            x = train_XX[i * batch_size:i * batch_size + batch_size]
            y = train_Y[i * batch_size:i * batch_size + batch_size]
            x = torch.from_numpy(x)  # (batch_size, 3, 224, 224)
            y = torch.from_numpy(y)  # (batch_size,) integer class labels
            x = x.to(device)
            y = y.long().to(device)

            out = net(x)
            loss = criterion(out, y)  # loss between predictions and labels
            optimizer.zero_grad()  # clear gradients left over from the previous step
            loss.backward()  # backpropagate to compute the gradients
            optimizer.step()  # apply the updates to the parameters of net
            train_loss.append(loss.item())

        # Report the mean loss for the epoch, then reset the buffer
        print(epoch, i * batch_size, np.mean(train_loss))
        train_loss = []

    # Accuracy is measured on the same 2000 training images (no separate test set)
    net.eval()  # BatchNorm uses its running statistics instead of per-batch statistics
    total_correct = 0
    with torch.no_grad():  # no gradients are needed for evaluation
        for i in range(2000):
            x = train_XX[i].reshape(1, 3, 224, 224)
            y = train_Y[i]
            x = torch.from_numpy(x).to(device)
            out = net(x).cpu().numpy()
            pred = np.argmax(out)
            if pred == y:
                total_correct += 1

    print(total_correct)
    acc = total_correct / 2000.0
    print('test acc:', acc)
    torch.cuda.empty_cache()
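
The per-channel copy loop that fills `train_XX` converts the data from (N, H, W, C) layout to the (N, C, H, W) layout that `nn.Conv2d` expects. As a hedged alternative, the same rearrangement can be done in one call with `np.transpose`. The small array below is dummy data standing in for `train_X`, not the article's dataset, and the names `nhwc`, `nchw` and `nchw_loop` are illustrative only.

```python
import numpy as np

# Dummy batch in (N, H, W, C) layout, standing in for train_X.
nhwc = np.arange(2 * 4 * 5 * 3, dtype="float32").reshape(2, 4, 5, 3)

# Loop version, as in the listing: copy one channel at a time.
nchw_loop = np.empty((2, 3, 4, 5), dtype="float32")
for c in range(3):
    nchw_loop[:, c, :, :] = nhwc[:, :, :, c]

# One-call version: move the channel axis to position 1.
# ascontiguousarray makes a compact copy rather than a strided view.
nchw = np.ascontiguousarray(np.transpose(nhwc, (0, 3, 1, 2)))

print(nchw.shape, np.array_equal(nchw, nchw_loop))
```

With the one-call version the separate `train_XX` buffer would not need to be preallocated.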

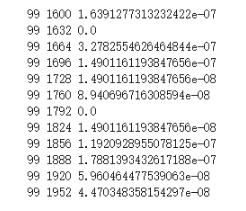

After changing batch_size to 32 and the number of training epochs to 100 in the code above, the model overfits.
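
Note that the accuracy printed by the listing is measured on the same 2000 images used for training, so on its own it cannot show how well the model generalizes. One common way to make overfitting visible is to hold out part of the data and compare the two accuracies. Below is a minimal sketch of such a split using only the standard library; the function name `split_indices`, the 80/20 ratio and the seed are assumptions for illustration and do not come from the article.

```python
import random


def split_indices(n, val_fraction=0.2, seed=0):
    """Shuffle range(n) and split it into train and validation index lists."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    n_val = int(n * val_fraction)
    # The first n_val shuffled indices are held out for validation.
    return indices[n_val:], indices[:n_val]


train_idx, val_idx = split_indices(2000)
print(len(train_idx), len(val_idx))
```

The index lists could then select rows, for example `train_XX[train_idx]` for training and `train_XX[val_idx]` for evaluation. A training accuracy far above the held-out accuracy is the usual sign of overfitting.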
