
How to Use the TensorFlow softmax Function: tf.nn.softmax Usage Explained

Date: 2020-06-30 Editor: 袖梨 Source: 一聚教程网

How do you use the TensorFlow softmax function? This article walks through the usage of tf.nn.softmax with detailed code, as a reference for anyone who needs it.

The function is defined as follows:

    def softmax(logits, axis=None, name=None, dim=None):
        """Computes softmax activations.

        This function performs the equivalent of

            softmax = tf.exp(logits) / tf.reduce_sum(tf.exp(logits), axis)

        Args:
          logits: A non-empty `Tensor`. Must be one of the following types: `half`,
            `float32`, `float64`.
          axis: The dimension softmax would be performed on. The default is -1 which
            indicates the last dimension.
          name: A name for the operation (optional).
          dim: Deprecated alias for `axis`.

        Returns:
          A `Tensor`. Has the same type and shape as `logits`.

        Raises:
          InvalidArgumentError: if `logits` is empty or `axis` is beyond the last
            dimension of `logits`.
        """
        axis = deprecation.deprecated_argument_lookup("axis", axis, "dim", dim)
        if axis is None:
            axis = -1
        return _softmax(logits, gen_nn_ops.softmax, axis, name)
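The equivalence stated in the docstring can also be written per element. As a sketch, let $x$ be one slice of `logits` taken along `axis` and $K$ its length (both symbols are introduced here only for illustration and do not appear in the original source):

```latex
\operatorname{softmax}(x)_i = \frac{e^{x_i}}{\sum_{j=1}^{K} e^{x_j}}, \qquad i = 1, \dots, K
```

Each output lies strictly between 0 and 1, and the $K$ outputs of one slice sum to 1.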

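The result of softmax has the same shape as the input tensor. If the shape does not change, what does softmax actually do to the tensor?

The answer: softmax picks one axis and, for every slice along that axis, applies an activation (exponentiation) followed by normalization, so that the values along that axis sum to 1.

In general, that axis is the dimension that represents the classes (tf.nn.softmax defaults to axis=-1, i.e. the last dimension).

Example: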

    import numpy as np


    def softmax(X, theta=1.0, axis=None):
        """
        Compute the softmax of each element along an axis of X.

        Parameters
        ----------
        X: ND-Array. Probably should be floats.
        theta (optional): float parameter, used as a multiplier
            prior to exponentiation. Default = 1.0
        axis (optional): axis to compute values along. Default is the
            first non-singleton axis.

        Returns an array the same size as X. The result will sum to 1
        along the specified axis.
        """
        # make X at least 2d
        y = np.atleast_2d(X)

        # find axis
        if axis is None:
            axis = next(j[0] for j in enumerate(y.shape) if j[1] > 1)

        # multiply y against the theta parameter
        y = y * float(theta)

        # subtract the max for numerical stability
        y = y - np.expand_dims(np.max(y, axis=axis), axis)

        # exponentiate y
        y = np.exp(y)

        # take the sum along the specified axis
        ax_sum = np.expand_dims(np.sum(y, axis=axis), axis)

        # finally: divide elementwise
        p = y / ax_sum

        # flatten if X was 1D
        if len(X.shape) == 1:
            p = p.flatten()

        return p


    c = np.random.randn(2, 3)
    print(c)

    # Treat axis 0 as the class axis: 2 classes in total
    cc = softmax(c, axis=0)
    # Treat the last axis as the class axis: 3 classes in total
    ccc = softmax(c, axis=-1)
    print(cc)
    print(ccc)
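The "subtract the max for numerical stability" step in the example is easy to overlook, so here is a minimal sketch of why it matters. The helper names `naive_softmax` and `stable_softmax` and the input values are hypothetical, chosen only for illustration, and the `theta` multiplier is left out for brevity.

```python
import numpy as np


def naive_softmax(x):
    """Direct translation of exp(x) / sum(exp(x)); overflows for large inputs."""
    e = np.exp(x)
    return e / np.sum(e)


def stable_softmax(x):
    """Same quantity, but shifted by max(x) so the largest exponent is 0."""
    e = np.exp(x - np.max(x))
    return e / np.sum(e)


# Hypothetical logits, large enough that np.exp overflows in float64.
logits = np.array([1000.0, 1001.0, 1002.0])

# np.exp(1000.0) overflows to inf, so the naive version computes inf / inf = nan.
with np.errstate(over="ignore", invalid="ignore"):
    naive = naive_softmax(logits)

stable = stable_softmax(logits)

print(naive)   # all entries are nan
print(stable)  # a valid distribution that sums to 1
```

Shifting every input by the same constant does not change the softmax result, because the factor cancels between numerator and denominator; it only keeps the exponentials in a representable range.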

Result:

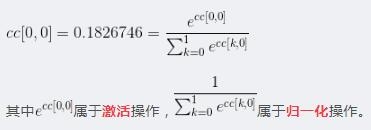

    c:
    [[-1.30022268  0.59127472  1.21384177]
     [ 0.1981082  -0.83686108 -1.54785864]]
    cc:
    [[0.1826746  0.80661068 0.94057075]
     [0.8173254  0.19338932 0.05942925]]
    ccc:
    [[0.0500392  0.33172426 0.61823654]
     [0.65371718 0.23222472 0.1140581 ]]

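As the output shows, applying softmax along axis=0 makes the results sum to 1 along axis=0 (e.g. 0.1826746 + 0.8173254 = 1). Likewise, applying it along axis=1 makes the results sum to 1 along axis=1 (e.g. 0.0500392 + 0.33172426 + 0.61823654 = 1).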

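How are these values obtained?

Taking cc as an example (softmax along axis=0), each entry is normalized over its column:

    cc[0,0] = exp(c[0,0]) / (exp(c[0,0]) + exp(c[1,0]))
            = exp(-1.30022268) / (exp(-1.30022268) + exp(0.1981082))
            = 0.1826746

Taking ccc as an example (softmax along axis=1), each entry is normalized over its row:

    ccc[0,0] = exp(c[0,0]) / (exp(c[0,0]) + exp(c[0,1]) + exp(c[0,2]))
             = exp(-1.30022268) / (exp(-1.30022268) + exp(0.59127472) + exp(1.21384177))
             = 0.0500392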
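As a minimal sketch of how these values arise, the snippet below recomputes cc[0, 0] and ccc[0, 0] directly from the definition, using the matrix c printed in the result above. The variable names `cc_00` and `ccc_00` are introduced here only for illustration.

```python
import numpy as np

# The matrix c printed in the result above.
c = np.array([[-1.30022268, 0.59127472, 1.21384177],
              [0.1981082, -0.83686108, -1.54785864]])

# cc[0, 0]: normalize over column 0 (axis=0), i.e. over c[0, 0] and c[1, 0].
cc_00 = np.exp(c[0, 0]) / (np.exp(c[0, 0]) + np.exp(c[1, 0]))

# ccc[0, 0]: normalize over row 0 (axis=1), i.e. over c[0, 0], c[0, 1], c[0, 2].
ccc_00 = np.exp(c[0, 0]) / np.sum(np.exp(c[0]))

print(cc_00)   # approximately 0.1826746
print(ccc_00)  # approximately 0.0500392
```

The same numerator, exp(c[0, 0]), appears in both cases; only the set of entries in the denominator changes with the chosen axis.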

Now that the calculation is clear, consider what these values actually mean:

cc[0,0] represents the probability P( label = 0 | value = [-1.30022268 0.1981082] = c[:,0] ) = 0.1826746

cc[1,0] represents the probability P( label = 1 | value = [-1.30022268 0.1981082] = c[:,0] ) = 0.8173254

ccc[0,0] represents the probability P( label = 0 | value = [-1.30022268 0.59127472 1.21384177] = c[0] ) = 0.0500392

ccc[0,1] represents the probability P( label = 1 | value = [-1.30022268 0.59127472 1.21384177] = c[0] ) = 0.33172426

ccc[0,2] represents the probability P( label = 2 | value = [-1.30022268 0.59127472 1.21384177] = c[0] ) = 0.61823654

This extends to higher dimensions. Suppose c is a 3-D tensor of shape [batch_size, timesteps, categories]:

    output = tf.nn.softmax(c, axis=-1)

Then output[1, 2, 3] represents P( label = 3 | value = c[1,2] ).
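The 3-D case can be sketched without TensorFlow. The helper `softmax_last_axis` below is a hypothetical NumPy stand-in for `tf.nn.softmax(c, axis=-1)`, and the sizes 2, 3 and 4 are assumptions chosen only for illustration.

```python
import numpy as np


def softmax_last_axis(x):
    """NumPy stand-in (an assumption) for tf.nn.softmax(x, axis=-1)."""
    shifted = x - np.max(x, axis=-1, keepdims=True)  # for numerical stability
    e = np.exp(shifted)
    return e / np.sum(e, axis=-1, keepdims=True)


# Hypothetical sizes chosen only for illustration.
batch_size, timesteps, categories = 2, 3, 4
rng = np.random.default_rng(0)
c = rng.standard_normal((batch_size, timesteps, categories))

output = softmax_last_axis(c)

print(output.shape)         # same shape as c: (2, 3, 4)
print(output.sum(axis=-1))  # every (batch, timestep) slice sums to 1
print(output[1, 2, 3])      # P(label = 3 | value = c[1, 2])
```

Each of the batch_size × timesteps vectors c[i, j] is normalized independently, so output[i, j] is a separate distribution over the categories.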
